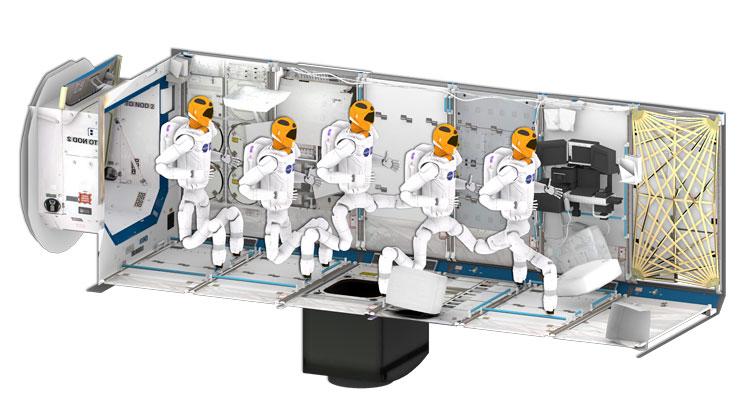

NASA recently announced that Robonaut 2 (R2) has been repaired and is nearly ready to return to the International Space Station. Julia Badger, the autonomous systems technology discipline lead at NASA, spoke about her collaboration with Lydia Kavraki, the Noah Harding Professor of Computer Science at Rice University.

“We’re trying to be really specific about the types of tasks that we want the robot to be doing so that the robot can do those tasks without human intervention as much as possible,” Badger said in an IEEE Spectrum interview.

“We’ve been working with Lydia Kavraki’s group at Rice University to get the motion planning and path planning such that it can handle all of our 58 degrees of freedom, and it does a really good job on its own,” she said.

The Kavraki Lab developed a framework for motion planning for Robonaut 2. Motion planning is a core area of expertise for the Kavraki Lab, which has developed algorithms for a variety of robotic systems over the last 10 years. As a field of research, robot motion planning is about designing the theory and methods that enable a robot to figure out how to get from point A to point B without colliding with any object in its environment. Zak Kingston, a Ph.D. student in Kavraki's lab, explains how the lab's research applies in space.
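To make the "point A to point B without colliding" idea concrete, here is a minimal sketch of one classic sampling-based approach, the rapidly-exploring random tree (RRT). It is an illustration only, not R2's planner: it assumes a point robot in a 10 x 10 plane with circular obstacles, and every function name and parameter below is hypothetical. R2 plans over 58 joint angles rather than two coordinates, but the grow-a-tree-of-collision-free-moves idea is the same.

```python
import math
import random


def collides(p, obstacles):
    """True if point p lies inside any circular obstacle (cx, cy, r)."""
    return any(math.dist(p, (cx, cy)) <= r for cx, cy, r in obstacles)


def segment_free(a, b, obstacles, step=0.05):
    """Check the straight segment a -> b by testing evenly spaced points on it."""
    n = max(1, int(math.dist(a, b) / step))
    return all(
        not collides((a[0] + (b[0] - a[0]) * i / n, a[1] + (b[1] - a[1]) * i / n), obstacles)
        for i in range(n + 1)
    )


def rrt(start, goal, obstacles, bounds=(0.0, 10.0), step=0.5,
        goal_tol=0.5, max_iters=5000, seed=0):
    """Grow a tree of collision-free moves from start until it reaches goal."""
    rng = random.Random(seed)
    parent = {start: None}  # tree stored as child -> parent
    nodes = [start]
    for _ in range(max_iters):
        # Sample a random point, with a small bias toward the goal.
        if rng.random() < 0.1:
            sample = goal
        else:
            sample = (rng.uniform(*bounds), rng.uniform(*bounds))
        nearest = min(nodes, key=lambda node: math.dist(node, sample))
        d = math.dist(nearest, sample)
        if d == 0.0:
            continue
        # Take at most one step from the nearest tree node toward the sample.
        t = min(1.0, step / d)
        new = (nearest[0] + (sample[0] - nearest[0]) * t,
               nearest[1] + (sample[1] - nearest[1]) * t)
        if not segment_free(nearest, new, obstacles):
            continue  # this move would hit an obstacle; discard it
        parent[new] = nearest
        nodes.append(new)
        if math.dist(new, goal) <= goal_tol and segment_free(new, goal, obstacles):
            if new != goal:
                parent[goal] = new
            # Walk back from the goal to the start to recover the path.
            path = [goal]
            while parent[path[-1]] is not None:
                path.append(parent[path[-1]])
            return path[::-1]
    return None  # no path found within max_iters
```

For example, `rrt((1.0, 1.0), (9.0, 9.0), [(5.0, 5.0, 1.5)])` returns a list of waypoints that routes around the obstacle in the middle. The operator only supplies the start and goal; the algorithm fills in every intermediate step.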

“The lab, in collaboration with the R2 team, has developed the motion planning system and theory necessary for a complicated, humanoid robot like R2 to do meaningful work, such as climbing across handrails, manipulating tools and bags, or operating hatches and mechanisms,” Kingston said.

“Imagine a robot like R2 up on the space station, and an operator at mission control wants to have R2 do something simple to help the astronauts out with their chores, like wipe down a handrail. If that operator doesn't have a motion planning system for R2, they have to manually specify the angle of every joint on the robot for every step in the path,” he said.

“That is an incredibly tedious job, and the robot would always need a human to tell it how to do everything. With motion planning, the operator could just click ‘go,’ and the motion planning algorithm automatically generates that motion. In the future, R2 could figure out how to do a task on its own,” Kingston said.

Planning with motion constraints is the focus of Kingston’s research. If a robot needs to hand a cup of coffee to a human, a motion constraint ensures that the robot does not tip the cup over and pour the coffee on the floor.

“For example, R2 must always be grasping a handrail so it doesn't float away, leaving 500 pounds of robot drifting through the station. Another common motion constraint example is when R2 is manipulating anything with both hands. The two hands must remain connected to the object R2 is manipulating at all times: R2 can't just let go whenever it wants,” Kingston said.

A motion planner needs additional intelligence to satisfy motion constraints, because each constraint limits what the robot can do. Broadly speaking, the more degrees of freedom a robot has and the more constraints it must satisfy, the harder the planning problem becomes.

“I've been working with the R2 team since 2016 to develop motion planning techniques that work for R2 and its constraints, as well as an interface that an operator can use to specify motion planning requests. I've also developed a framework for motion planning with constraints that unifies much of the previous work in planning with constraints. The framework decouples how constraints are satisfied from how an algorithm plans motion, which enables a developer to mix and match methods to find the best tools for their particular problem,” Kingston said.
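The decoupling Kingston describes can be illustrated with a toy sketch. One common way to satisfy an equality constraint F(q) = 0 is to take an ordinary motion step and then project the result back onto the set of configurations where the constraint holds. Below, the planner-side code (`constrained_steer`) only ever calls `project()`, so the constraint can be swapped without touching the planning logic. The `Constraint` class, `constrained_steer`, and all parameters are hypothetical and are not OMPL's actual API; projection is only one of several constraint-satisfaction methods, and the unit-circle constraint in the usage note is a simple stand-in for something like "the hand stays on the handrail."

```python
import math
from typing import Callable, List, Optional, Sequence

Vec = List[float]


class Constraint:
    """Hypothetical interface for one equality constraint F(q) = 0.

    The planner never looks inside; it only calls project().
    """

    def __init__(self, f: Callable[[Sequence[float]], float],
                 grad: Callable[[Sequence[float]], Vec],
                 tol: float = 1e-8, max_iters: int = 50):
        self.f, self.grad, self.tol, self.max_iters = f, grad, tol, max_iters

    def project(self, q: Sequence[float]) -> Optional[Vec]:
        """Newton-style projection: q <- q - F(q) * grad / |grad|^2 until |F(q)| < tol."""
        q = list(q)
        for _ in range(self.max_iters):
            value = self.f(q)
            if abs(value) < self.tol:
                return q
            g = self.grad(q)
            norm2 = sum(gi * gi for gi in g)
            if norm2 == 0.0:
                return None  # degenerate gradient; cannot project
            q = [qi - value * gi / norm2 for qi, gi in zip(q, g)]
        return None  # did not converge


def constrained_steer(a: Sequence[float], b: Sequence[float],
                      constraint: Constraint, step: float = 0.1,
                      max_steps: int = 1000) -> List[Vec]:
    """Move from a toward b in small steps, projecting each step onto the constraint.

    A sampling-based planner could call this in place of straight-line motion.
    """
    current = constraint.project(a)
    if current is None:
        return []
    path = [current]
    for _ in range(max_steps):
        remaining = math.dist(current, b)
        if remaining <= step:
            final = constraint.project(b)
            if final is not None:
                path.append(final)
            return path
        # Unconstrained step toward b, then pull it back onto F(q) = 0.
        t = step / remaining
        candidate = [c + (bi - c) * t for c, bi in zip(current, b)]
        projected = constraint.project(candidate)
        if projected is None or math.dist(projected, current) < 1e-9:
            return path  # stuck: return the partial path
        path.append(projected)
        current = projected
    return path
```

For instance, with `circle = Constraint(lambda q: q[0]**2 + q[1]**2 - 1.0, lambda q: [2*q[0], 2*q[1]])`, calling `constrained_steer([1.0, 0.0], [0.0, 1.0], circle)` produces waypoints that follow the arc of the unit circle instead of cutting across it. Replacing `circle` with a different constraint changes what "valid motion" means without any change to `constrained_steer`, which is the mix-and-match property described above.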

“For R2, this enables us to use planners designed to handle R2 and all its 58 degrees of freedom, while still being able to satisfy the constraints it needs satisfied to move. R2 can climb around on handrails, lift bags, open valves and hatches, and achieve whatever manipulation tasks it needs accomplished through this motion planning system. Additionally, the framework is open source in the Open Motion Planning Library (OMPL), a popular software library for robot motion planning that the Kavraki Lab maintains and develops. Other researchers can use the code for their own constrained planning problems,” he said.

“It is truly amazing to see Zak’s research work applied to such a complex system. When we started this project, we were overwhelmed. Now we can sit back and relax while Zak’s algorithms do the work. I am very impressed by how Zak has managed to pull disparate ideas together under a common framework and deliver such impressive performance,” Kavraki said. “Dr. Mark Moll has also provided insightful guidance for this project,” she added.

“The use of robots in space applications is very much justified. Robots can help astronauts in long-range missions, and they can act as caretakers of space habitats when humans are not around,” she said.

“I love knowing that I contributed meaningfully to a project with a scope as large as R2. It's really incredible seeing R2 move around using motions planned from my work. With R2 going back up to the space station, I'm really looking forward to seeing some motion planning in space, especially through my work,” Kingston said.

Cintia Listenbee, Communications and Marketing Specialist in Computer Science